No computer system is infallible. When something goes wrong, syslogs are often the first place to look at while diagnosing the issue. With systems growing more and more complex, analyzing millions of log entries has become a daunting task. In this post, we'll use Python together with pattern recognition and data visualisation techniques to understand logs better.

The concept of log analysis is as old as log gathering itself. Log files are a rich source of valuable information about

computer systems, be it a simple desktop app, a website handling millions of requests every hour, or an industrial robot

assembling car parts. Most systems produce log entries constantly, with a rate dependent on their complexity, software

practices, and other factors. Entries of different levels (such as WARN, ERROR, INFO, DEBUG and more) are

appended to special log files throughout the whole life cycle of an application.

Log files are usually rotated, often with the oldest ones compressed and backed up so that they're available if a need for investigating old logs arises in the future. Due to the frequency of creating new entries, a log file from a single day may well have millions of lines, amounting to a couple of gigabytes or more. With this gargantuan amount of information, finding logs pointing to a specific failure in the system, not to mention keeping an eye on any sights of potential future errors, is a challenging task.

Putting pattern recognition methods in action can make large volumes of logs understandable. Together with information visualisation techniques, it is possible to create an easy-to-digest summary of log files too large to comprehend manually. Making logs more approachable reduces the time it takes to diagnose existing issues and makes it easier to spot any suspicious logs, which may cause trouble in the future.

What's with the logs

First things first. Let's look at the way logs are structured and the reasons you might have to work with them if you don't already.

Log entry structure

There are many standardized log formats (for instance the Common Log Format), but in most cases, developers are free to customize the form of logs gathered by their applications. Each system is distinct and works with different data, so the number of formats you might encounter is relatively high.

Throughout this post, we'll use AEM logs as an example. You can read more about the way AEM produces logs in the documentation. AEM makes it possible to configure things such as the logging level, details regarding the log files rotation, the logs format, and more. In AEM 6.5, the default logs format is:

{0,date,dd.MM.yyyy HH:mm:ss.SSS} *{4}* [{2}] {3} {5}You can check the meaning behind each section here. This format leads to log entries looking like this:

08.11.2020 22:18:14.702 *INFO* [oak-repository-executor-1] com.adobe.granite.repository Service [6672, [org.apache.jackrabbit.oak.api.jmx.SessionMBean]] ServiceEvent REGISTEREDAs you can see, AEM offers great flexibility when it comes to handling logs. To avoid confusion, we'll stick to its default format throughout this post.

Deluged with logs

Single log entries look manageable. The format is pretty clear and the actual log message is usually pretty informative. The problem arises when you take a step back and look at a set of logs as a whole. There are many occasions when you might find yourself scrolling through lengthy log files, just to name a few:

- Debugging. Probably the most common case. The system is acting up or you have a report of some bug from the past. If you know a rough timestamp of the erroneous event - feel lucky, you should be able to pinpoint some relevant logs fairly easily.

- Daily system checkups. Depending on the way you maintain your live platforms, you may have a team of people responsible for scanning log files on a daily basis, in search of any suspicious entries. Most people find such tasks very mundane. On top of that, it's easy to miss something important and the sheer amount of data one has to go through is very discouraging. Yuck.

- Assessing the current status of a platform. Among other metrics, such as CPU usage or incoming traffic, logs can tell a lot about the processing that goes under the hood at any moment. Based on the contents of log files, it is possible to gauge whether a system is doing some heavy lifting, is frozen, etc.

What tools are there

Knowing that digging into logs is inevitable, it's good to know the tools you might want to use to make the process as painless as possible.

Manual

Let's start with the most primitive solution first. Locate your log file, say error.log - most probably it is pretty

heavy. Although it's a text file, I wouldn't recommend opening it using any standard GUI notepad. Some might try to load

a long file and crash in the process, while some can't handle files heavier than a certain threshold by design.

The easiest way of investigating the file is using some CLI programs. There are many options, but less seems like a

reasonable one to choose as it offers many handy commands and can handle large files smoothly since it doesn't load the

whole file while starting up (unlike vi). To run it, simply execute:

$ less error.logNow, depending on what you want to do, you might just scroll through the file using arrow keys, search for a phrase by

typing /phrase-to-find, go to the N-th percent of the file by typing Np, and much more. Check out the

manual if you want to make the most of less.

Dedicated

Now, let's look at the tools designed specifically for working with logs. There are both open source and commercial options available. Most tools offer some kind of log aggregation, meaning they can consolidate logs from discrete systems. Another must-have functionality is an advanced filtering system. Often, some basic methods of data visualisation are incorporated, such as bar charts, heat maps, and more. They make it easier to spot a sudden surge in the number of logs or to notice some correlations between errors.

Some of the most popular tools are (listed without any particular order):

Naturally, the list is not complete as there are many more products available on the market. The best thing to do is making your own research and deciding for yourself which one suits your needs best.

Discover patterns, visualise them

So far, we've mentioned some utilities making collecting and analysing logs easier. Even though they undoubtedly make the task of finding information less painful, they don't solve all the issues. In this post, we'll use Python to create a highly customisable solution, which may go a step beyond what standard log analysers provide out of the box. By using pattern recognition together with Natural Language Processing, we're going to give our analysis a strong boost. We'll use free, open source libraries to develop an application working outside any arbitrary log analyser, thus bringing benefit regardless of whether you use any aforementioned tools or not.

The plan is to organize logs by clustering individual entries and visualise the clusters on a plot in such a manner, as to pack as much information about the logs as possible on a single 2d image.

For demo purposes, we'll use an anonymized set of single-day logs coming from a live AEM environment. We'll deal with

ERROR level logs only, as they are usually the ones we are most interested in. We won't analyze other kinds of logs,

but the principle behind the methods presented still holds and they will work for different logs as well.

First, let's extract the logs we're interested in to a separate file.

$ grep -F "*ERROR*" error.log > errors-only.logLet's verify the first five entries, just to make sure we haven't messed anything up.

$ head -5 errors-only.log

05.10.2020 00:00:01.757 *ERROR* [sling-default-4599-HourlySnapshotJob] org.apache.sling.commons.scheduler.impl.QuartzScheduler Exception during job execution of job 'com.day.cq.reporting.impl.snapshots.SnapshotServiceImpl$2@386e6422' with name 'HourlySnapshotJob' : The query read or traversed more than 100000 nodes. To avoid affecting other tasks, processing was stopped.

05.10.2020 00:25:55.983 *ERROR* [OsgiInstallerImpl] org.apache.jackrabbit.vault.packaging.registry.impl.JcrPackageRegistry Stream is not a content package. Missing 'jcr_root'.

05.10.2020 00:26:07.685 *ERROR* [qtp134819915-439] org.apache.sling.engine.impl.SlingHttpContext handleSecurity: AuthenticationSupport service missing. Cannot authenticate request.

05.10.2020 00:26:07.686 *ERROR* [qtp134819915-439] org.apache.sling.engine.impl.SlingHttpContext handleSecurity: Possible reason is missing Repository service. Check AuthenticationSupport dependencies.

05.10.2020 00:26:09.537 *ERROR* [qtp134819915-440] org.apache.sling.engine.impl.SlingHttpContext handleSecurity: AuthenticationSupport service missing. Cannot authenticate request.Looking good. We can already see that there are three similar entries, two of them identical, save for the timestamps (third and fifth logs). Now, let's check how many entries are there in total.

$ cat errors-only.log | wc -l

637More than six hundred. Not great, not terrible. Diligently going through them all line by line would probably take about 15-30 minutes, depending on the number of duplicate entries.

Clustering

Let's see what we can do to organize the logs into easy-to-read groups. As each action of saving something to the log

file has to be explicitly programmed, there is a finite number of possible message formats in each log file. The things

that might be different are the entry details - things like the exact timestamp, IP addresses, variable values etc. If

we could cluster massive log files into groups of entries having the same origin (the same log.error() line in the

code), we could greatly reduce the amount of information to take in. This way we end up with a nice summary, directly

saying how many logs of what type have occurred.

The topic of automatic log analysis is nothing new. Multiple libraries and scientific papers dealing with this problem exist. Most of them employ clustering in an endeavour to detect abnormal logs. In our case, we're less interested in spotting anomalous logs than we are in clustering itself (however, detecting suspicious entries might be a by-product).

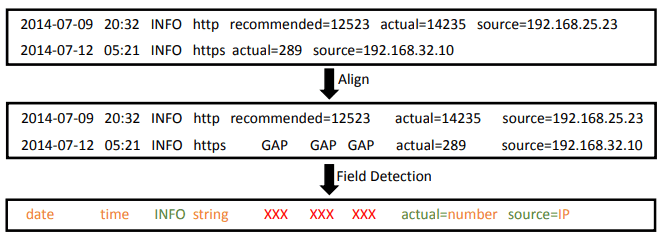

In this post, we'll use the LogMine framework presented in the paper LogMine: Fast Pattern Recognition for Log Analytics. We won't go into much detail here, if you're interested in the inner workings of the method, I encourage you to read the full paper. For our purposes, it's enough to know the principle behind the algorithm - it aligns individual entries and uses a special distance metric to gauge the similarity between them. If logs are similar enough (based on a parameter value), they're added to the same cluster. The intuition behind the algorithm is depicted in the figure below.

The image also visualises an important feature of LogMine - the ability to specify variables that are to be replaced by

tokens such as date, time, etc. It's very useful if you know the format of variable fields beforehand.

Fortunately, the algorithm has been implemented and shared on GitHub by Tony Dinh. You can find the repository here. The tool is extremely easy to install and use. Let's use it on our data and see the first five clusters found.

$ logmine errors-only.log | head -5

308 05.10.2020 09:47:13.699 *ERROR* [10.65.0.254 [1601884032080] GET /content/company/companycom/en-gb/home/utilities/broadband-and-tv/cheap-broadband.html HTTP/1.1] com.company.components.document.AnalyticsModel Error while parsing date

174 --- --- *ERROR* --- --- GET --- HTTP/1.1] org.apache.sling.servlets.get.impl.DefaultGetServlet No renderer for extension html, cannot render resource --- type=nt:unstructured, --- --- --- ---

40 02.10.2020 09:12:50.079 *ERROR* [sling-oak-observation-5743] com.day.cq.wcm.core.impl.event.RepositoryChangeEventListener Exception during event handling of page modifications: null

36 02.10.2020 00:00:01.770 *ERROR* [sling-default-1143-HourlySnapshotJob] org.apache.sling.commons.scheduler.impl.QuartzScheduler Exception during job execution of job 'com.day.cq.reporting.impl.snapshots.SnapshotServiceImpl$2@386e6422' with name 'HourlySnapshotJob' : The query read or traversed more than 100000 nodes. To avoid affecting other tasks, processing was stopped.

14 05.10.2020 00:26:20.684 *ERROR* [FelixStartLevel] doc-app-core [com.skynet.eureka.doc.renderer.DocRendererProvider(3503)] Field configs in component class com.skynet.eureka.doc.renderer.DocRendererProvider must be declared volatile to handle a dynamic referenceNice! The first number in each line is the cluster size and is followed by a cluster representation. We can see that we already know much more about the contents of our file. In this case, the majority of errors result from an incorrectly parsed date. The output of the method is already very informative, but it could be improved by adjusting the parameters of the method and making some small changes to the tool itself.

Since there are some changes to the code I wanted to introduce to make the solution fit my needs even more, I forked the repository and made some modifications to the codebase. The forked repository is available here. The list of changes is pretty short and includes:

- Changing the way variables (such as

date) are substituted (link). In the original project, variables work on tokenized log entries (split by whitespace), but I needed to have variables spanning multiple tokens. With this change, it is possible to substitute05.10.2020 00:00:01.757withtimestampinstead of defining two separate variables (dateandtime). - Storing original logs constituting the clusters (link). The original project doesn't save the individual logs, just the cluster representation and the number of entries. However, we'll want to know the details of each cluster to get the full picture.

- Saving the processing result to a JSON file (link). We'll use the output of the algorithm for further analysis.

To install the forked repository, go to the relase on

GitHub, download the logmine-0.3.0-py3-none-any.whl file and install it using pip.

$ pip install logmine-0.3.0-py3-none-any.whlNow it's time to run logmine once again, this time with more parameters to make the result as good as possible.

$ logmine errors-only.log \

-i 1 \

-m 0.2 \

-v \

'<timestamp>:/\d{2}\.\d{2}\.\d{4} \d{2}:\d{2}:\d{2}\.\d{3}/' \

'<IP>:/\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3}/' \

'<URL>:/(https?:\/\/(?:www\.|(?!www))[a-zA-Z0-9][a-zA-Z0-9-]+[a-zA-Z0-9]\.[^\s]{2,}|www\.[a-zA-Z0-9][a-zA-Z0-9-]+[a-zA-Z0-9]\.[^\s]{2,}|https?:\/\/(?:www\.|(?!www))[a-zA-Z0-9]+\.[^\s]{2,}|www\.[a-zA-Z0-9]+\.[^\s]{2,})/' \

'<path>:/(?<!HTTP)\/[^\s,\]]+/' \

'<instance_id>:/@[\d\w]{2,}/' \

'<number>:/\d{2,}/' \

-o result.json \

| head -5

308 <timestamp> *ERROR* [<IP> [<number>] GET <path> HTTP/1.1] com.company.components.document.AnalyticsModel Error while parsing date

158 <timestamp> *ERROR* [<IP> [<number>] GET <path> HTTP/1.1] org.apache.sling.servlets.get.impl.DefaultGetServlet No renderer for extension html, cannot render resource JcrNodeResource, type=nt:unstructured, superType=null, path=<path>

40 <timestamp> *ERROR* [sling-oak-observation-<number>] com.day.cq.wcm.core.impl.event.RepositoryChangeEventListener Exception during event handling of page modifications: null

39 <timestamp> *ERROR* [sling-default-<number>-HourlySnapshotJob] org.apache.sling.commons.scheduler.impl.QuartzScheduler Exception during job execution of job 'com.day.cq.reporting.impl.snapshots.SnapshotServiceImpl$2<instance_id>' with name 'HourlySnapshotJob' : The query read or traversed more than <number> nodes. To avoid affecting other tasks, processing was stopped.

17 <timestamp> *ERROR* [<IP> [<number>] GET <path> HTTP/1.1] org.apache.sling.servlets.get.impl.DefaultGetServlet No renderer for extension html, cannot render resource FilteringResourceWrapper, type=nt:unstructured, path=<path>, resource=[MergedResource [path=<path>, resources=[Ljava.lang.String;<instance_id>]]We use -i 1 to specify the minimal cluster size, -m 0.2 to increase cluster granularity (yielding more, less general

clusters), and -o result.json to save the outcome into the result.json file. Furthermore, we specify multiple regex

variables after -v. These are used to substitute timestamp, IP addresses, and more variable data with placeholders to

enhance the clustering performance. Thanks to specifying variables, the collected clusters are much more concise.

Data visualisation

By running the clustering algorithm, we've greatly reduced the volume of information to digest to understand the contents of our log file. However, the number of clusters can still be significant - it's 30 in this case. With a little effort, we can go a step further and visualise the clusters in a meaningful way.

If we look closer at the output we've received, we can spot that some clusters are more similar than others. For example, check out the entries below.

14 <timestamp> *ERROR* [FelixStartLevel] doc-app-core [com.skynet.eureka.doc.renderer.DocRendererProvider(<number>)] Field configs in component class com.skynet.eureka.doc.renderer.DocRendererProvider must be declared volatile to handle a dynamic reference

5 <timestamp> *ERROR* [FelixStartLevel] doc-app-core [com.skynet.eureka.doc.component.select.datasource.DataSourceProvider(<number>)] Field providers in component class com.skynet.eureka.doc.component.select.datasource.DataSourceProvider has unsupported type java.util.Map. It must be one of java.util.Collection or java.util.List.

1 <timestamp> *ERROR* [FelixStartLevel] com.skynet.aem.parsysediting [com.skynet.aem.parsysediting.core.RenderingScriptProviderRegister(<number>)] Field providers in component class com.skynet.aem.parsysediting.core.RenderingScriptProviderRegister has unsupported type java.util.Map

1 <timestamp> *ERROR* [sling-default-<number>-com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask.<number>] com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask Failed to validate URL <URL> javax.net.ssl.SSLHandshakeException: Received fatal alert: handshake_failure

1 <timestamp> *ERROR* [sling-default-<number>-com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask.<number>] com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask Failed to validate URL <URL> java.net.NoRouteToHostException: No route to host (Host unreachable)We can see that the first three are related and the same goes for the last two. Furthermore, we can divide the first

trio even more - the similarity between the second and the third one is greater than between the first one and any of

the remaining two. The second and the third one both contain has unsupported type java.util.Map phrase, but the

difference between them is large enough for LogMine not to merge these clusters.

It would make sense to visualise this similarity. We want to somehow keep these two clusters together, signifying that there is a relation between them. We'll use Natural Language Processing and data visualisation techniques to make it possible.

First, let's import all the libraries and methods we'll need. We'll use json to load the results from LogMine, numpy

to facilitate working with arrays, matplotlib for plotting data, re and replaceall for dealing with texts, and,

finally, scikit-learn to use data visualisation techniques.

import json

import re

import numpy as np

import matplotlib.pyplot as plt

from replaceall import replaceall

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.manifold import MDS

from sklearn.metrics.pairwise import cosine_similarity

from sklearn.preprocessing import MinMaxScalerLet's start by loading the results from the JSON file we've created before. We'll extract the clusters and sort them in descending order by the number of individual log entries in each cluster.

with open('result.json') as file:

data = json.loads(file.read())

clusters = sorted(data['clusters'], key=lambda cluster: cluster['count'], reverse=True)Now, let's perform some basic preprocessing on the patterns, it'll make the job easier for the methods used later by

uncluttering the data. We'll remove all the variables together with --- placeholders (their meaning is different for

each cluster - we don't want them to be interpreted as the same token), and some parenthesis. Additionally, we'll

replace certain characters with spaces to divide some phrases into multiple words (e.g. com.company.MyClass into

com company MyClass). Lastly, we want to get rid of unneeded whitespaces and make the whole patterns lowercase (XYZ

and xyz should be treated equally).

to_remove = ['<timestamp>', '<IP>', '<URL>', '<path>', '<instance_id>', '<number>', '---', '[', ']', '(', ')']

to_replace_with_space = ['.', ',', '=']

patterns = [cluster['pattern'] for cluster in clusters]

patterns_preprocessed = [replaceall(pattern, to_remove, '') for pattern in patterns]

patterns_preprocessed = [replaceall(pattern, to_replace_with_space, ' ') for pattern in patterns_preprocessed]

patterns_preprocessed = [re.sub(r'\s+', ' ', pattern).strip().lower() for pattern in patterns_preprocessed]After having all cluster patterns preprocessed, we can start measuring similarities. To this end, we first need to vectorize the patterns, which are currently in a text format. We'll use TFIDF (term frequency-inverse document frequency) vectorization, a common method of transforming a set of texts into vectors. The method substitutes each word in a text with its numeric representation. TFIDF uses two metrics - term frequency and inverse document frequency. Their product constitutes the final representation of each word. If you want to learn more, check out this blogpost.

tfidf = TfidfVectorizer().fit_transform(patterns)The next step is calculating the similarities between vectors. We'll use the cosine similarity measure. It takes two vectors and calculates a dot product of their normalized representations, yielding a number between 0 and 1. The higher the number, the greater the similarity.

cosine_similarities = cosine_similarity(tfidf, tfidf)As a result, we end up with a matrix of pattern similarities. In our case, this is a 30x30 matrix, as there are 30 clusters in the data. The flow from raw patterns to similarities matrix is presented in the diagram below. The example depicted is extremely simple as it shows the process for only two logs.

The end result is a matrix showing the similarities. The diagonal of the matrix is filled with ones, as each entry is identical to itself. In this example case, the similarity between the first and the second log (the same as the similarity between the second and the first) is equal to 0.09.

Now, how can we easily see which clusters are similar to each other if we have a high number of clusters in the data? The simplest solution would be to pick the cluster we want to investigate and print, for instance, top 5 clusters most similar to it. Not a bad strategy, but we could do better and visualise the similarity for all the clusters simultaneously.

This is where multidimensional scaling comes into play. MDS, for short, is a term encompassing various means of visualising the level of similarity Given a distance matrix with the distances between each pair of objects in a set, and a chosen number of dimensions, N, an MDS algorithm places each object into N-dimensional space such that the between-object distances are preserved as well as possible.

We'll use the classical MDS in our case, called PCoA (Principal Coordinates Analysis). We won't go into detail here, but I highly encourage you to read up on these techniques if you feel interested. However, full understanding of the inner workings of the method is not necessary to use it, often a basic intuition about the idea is good enough. Especially, having in mind that PCoA is not trivial, even for people with a good grasp of linear algebra.

Fortunately, we don't have to implement MDS ourselves as it's already included in the scikit library. We'll use it to

reduce each vector to 2 dimensions by passing the precomputed similarities matrix as an argument. Take notice that the

MDS algorithm implemented in scikit-learn expects a dissimilarities matrix, so we'll have to convert the

similarities into dissimilarities.

cosine_dissimilarities = 1 - cosine_similarities

mds = MDS(n_components=2, dissimilarity='precomputed')

reduced_patterns = mds.fit_transform(cosine_dissimilarities)Now we have a two-dimensional representation of each cluster. This can be visualised on a plot!

We'll treat 2d patterns representation as x,y coordinates on a plot. This way, each cluster becomes a single point on a plot. The distance between any two points will be proportional to the similarity between corresponding clusters. To spice things up a bit, in addition to position, we'll also use the plot to convey information about the cluster size. The bigger the point, the more logs are in the cluster. We'll also add some transparency to the points - the more opaque any point is, the bigger the number of logs in the cluster. This way, the most numerous clusters will stick out by being both bigger and brighter.

Let's move on to preparing the data we'll visualise. We'll extract the number of logs in each cluster and based on that

calculate sizes and colors of our points on a plot. Values behind colors variable will represent alpha channel values.

The bigger the value, the more opaque an object will be, with zero meaning complete transparency.

count = np.array([log['count'] for log in clusters])

count_norm = (count - np.min(count)) / np.max(count)

sizes = count_norm * 2900 + 100

colors = count_norm * 0.75 + 0.25

data = zip(sizes, colors, reduced_patterns)We want to have a way of specifying the range of possible sizes and colors (we don't want the points to have size 0 or

be completely transparent). To this end, we first create count_norm variable, which is a normalized version of the

count vector, meaning its smallest element is equal to 0 and the largest equal to 1. The normalized array is used to

calculate sizes and colors with a desired range. This is done by multiplying the vector by the desired span and adding

an offset to it. In this case, we limit the sizes to [100, 3000] range, and colors to [0.25, 1].

Now, let's use matplotlib library to visualise the end result.

plt.figure(figsize=(10, 5))

plt.axis('off')

for i, (size, color, position) in enumerate(data):

plt.scatter(position[0], position[1], s=size, alpha=color, c='orange')

plt.annotate(i, xy=position)

plt.show()We iterate through all clusters and add them to the plot. Additionally, we annotate each point with a cluster index, so we can trace the clusters back and check the individual logs constituting them.

Let's look at the final plot.

The plot shows all 30 clusters, indexed from 0 to 29. In total, more than six hundred log entries are represented. It takes no more than a second to identify the clusters with the highest amount of logs - 0 and 1. These are respectively:

308 <timestamp> *ERROR* [<IP> [<number>] GET <path> HTTP/1.1] com.company.components.document.AnalyticsModel Error while parsing dateand

158 <timestamp> *ERROR* [<IP> [<number>] GET <path> HTTP/1.1] org.apache.sling.servlets.get.impl.DefaultGetServlet No renderer for extension html, cannot render resource JcrNodeResource, type=nt:unstructured, superType=null, path=<path>Looking at the plot, it's fairly easy to spot some dense groups of points as well. Let's analyse some of them in more detail. I've selected three example groups to go over.

Let's look into the first one. It contains the following clusters:

3 <timestamp> *ERROR* [sling-default-<number>-com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask.<number>] com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask Failed to validate URL <URL> java.net.UnknownHostException: --- Name or service not known

3 <timestamp> *ERROR* [sling-default-<number>-com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask.<number>] com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask Failed to validate URL <URL> --- ---

3 <timestamp> *ERROR* [sling-default-<number>-com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask.<number>] com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask Failed to validate URL <URL> org.apache.http.conn.HttpHostConnectException: Connect to --- --- failed: Connection timed out (Connection timed out)

1 <timestamp> *ERROR* [sling-default-<number>-com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask.<number>] com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask Failed to validate URL <URL> javax.net.ssl.SSLHandshakeException: Received fatal alert: handshake_failure

1 <timestamp> *ERROR* [sling-default-<number>-com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask.<number>] com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask Failed to validate URL <URL> java.net.NoRouteToHostException: No route to host (Host unreachable)

1 <timestamp> *ERROR* [sling-default-<number>-com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask.<number>] com.day.cq.rewriter.linkchecker.impl.LinkCheckerTask Failed to validate URL <URL> javax.net.ssl.SSLHandshakeException: sun.security.validator.ValidatorException: PKIX path building failed: sun.security.provider.certpath.SunCertPathBuilderException: unable to find valid certification path to requested targetThey're very related, no doubt about that. All of them have been produced by a LinkCheckerTask, which failed to

validate some URLs. However, each entry is slightly different, so it makes sense not to merge them all together - it's

possible they have different root causes and should be treated as separate issues.

Now, let's go to the second group:

158 <timestamp> *ERROR* [<IP> [<number>] GET <path> HTTP/1.1] org.apache.sling.servlets.get.impl.DefaultGetServlet No renderer for extension html, cannot render resource JcrNodeResource, type=nt:unstructured, superType=null, path=<path>

17 <timestamp> *ERROR* [<IP> [<number>] GET <path> HTTP/1.1] org.apache.sling.servlets.get.impl.DefaultGetServlet No renderer for extension html, cannot render resource FilteringResourceWrapper, type=nt:unstructured, path=<path>, resource=[MergedResource [path=<path>, resources=[Ljava.lang.String;<instance_id>]]Both entries are about resource rendering issues, but the resources are different - JcrNodeResource and

FilteringResourceWrapper. Again, very similar, but by no means the same.

Finally, the third group:

1 <timestamp> *ERROR* [FelixStartLevel] com.company [com.company.publication.articlelist.foundation.template.RootPathProvider(<number>)] Field providers in component class com.company.publication.articlelist.foundation.template.RootPathProvider has unsupported type java.util.Map

1 <timestamp> *ERROR* [FelixStartLevel] com.company [com.company.publication.articlelist.foundation.template.TemplateProvider(<number>)] Field categoryToProvider in component class com.company.publication.articlelist.foundation.template.TemplateProvider has unsupported type java.util.MapAgain, the entries are closely related.

So far, we've analysed clusters close to each other. Let's take some points which are far apart and see whether they differ or not. Take a look at clusters 8 and 16. These are respectively:

5 <timestamp> *ERROR* [FelixStartLevel] --- --- Field --- in component class --- has unsupported type --- It must be one of java.util.Collection or java.util.List.and

1 <timestamp> *ERROR* [<IP> [<number>] GET <path> HTTP/1.1] org.apache.sling.engine.impl.SlingRequestProcessorImpl service: Uncaught ThrowableApart from some parts which are common to all logs (things like timestamps or the *ERROR* phrase), it's hard to see

any similarity. This corroborates our story - the clusters are unrelated, so they are far apart on the plot.

That's it! By using pattern recognition algorithms together with data visualisation techniques we created a simple representation of 637 log entries. Understanding what's happening in logs with the help of a cluster plot takes a fraction of the time needed to through the log file line by line. No data is lost in the process, and individual log entries are still accessible.

There is still a lot of room for improvement in the presented framework, starting with tuning LogMine parameters, changing the preprocessing pipeline, or using a different distance metric (for instance the Euclidean one). Additionally, making the plot interactive would greatly improve the experience of analysing the data.

Summary

In this post, we've used Python together with some pattern recognition algorithms and basic data visualisation tools to extract useful information from a large number of log entries. The techniques presented can aid humans in understanding logs better and faster, thus directly impacting the performance of QA and dev teams.